Role

Data Team Lead

Industry

Transportation

Duration

3 months

Transportation App User Data ETL

Project Overview

Designed and implemented a complete ETL (Extract, Transform, Load) data pipeline for a pre-Series A startup, enabling data-driven product decisions that increased signup conversion by 30% and improved user retention.

Business Results

30% increase in signup conversion through data-driven onboarding optimization

$20,000 annual savings compared to enterprise analytics solutions

50% increase in user registrations through optimized flows

30% reduction in meeting time through focused, data-informed discussions

Organizational Transformation

Cultural Shift: Transformed the entire organization from opinion-based to data-driven decision-making

Democratized Access: Enabled every team member to independently explore data insights

Unified Infrastructure: Created company's first centralized data system

Challenge & Strategic Solution

Starting Point: "Can you show us our user data?"

When leadership asked for user analytics, I realized we had a fundamental problem: no data infrastructure existed. As the sole technical team member, I needed to build everything from scratch.

Core Challenges

Limited Budget: Enterprise solutions like Amplitude ($20K+/year) exceeded our budget

No Infrastructure: Zero existing data collection or storage systems

Cross-functional Needs: Planning, Marketing, and Engineering teams needed different insights

Technical Constraints: Had to learn ETL processes while building them

Strategic Technology Selection

Rather than buying expensive enterprise tools, I chose cost-effective, scalable solutions:

Google Firebase: Free-tier event logging with real-time capabilities

BigQuery: Pay-per-use data warehousing with powerful SQL capabilities

Google Data Studio: Free visualization platform with seamless integration

Python: Custom data processing scripts for complex transformations

Technical Implementation

ETL Pipeline Architecture

Extract Layer:

Mobile App → Firebase Events → Raw Event Storage

Web App → Firebase Analytics → Data Validation Layer

Transform Layer:

Data Cleaning: Standardized event formats, removed duplicates

Validation Process: Cross-referenced logged events with actual user behavior

Quality Checks: Automated tests to catch data integrity issues early
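The Transform-layer steps above can be sketched roughly as follows. This is a minimal illustration rather than the project's actual script: the field names (`event_id`, `user_id`, `name`, `ts`) and the snake_case naming convention are assumptions made for the example.

```python
from datetime import datetime, timezone

REQUIRED_FIELDS = ("event_id", "user_id", "name", "ts")  # hypothetical schema

def clean_events(raw_events):
    """Standardize event records and drop duplicates.

    Each raw event is assumed to be a dict with the keys in REQUIRED_FIELDS,
    where 'ts' is a Unix timestamp in seconds.
    """
    seen_ids = set()
    cleaned = []
    for event in raw_events:
        # Quality check: skip records that are missing required fields.
        if not all(key in event for key in REQUIRED_FIELDS):
            continue
        # Deduplicate on event_id (for example, client-side retries).
        if event["event_id"] in seen_ids:
            continue
        seen_ids.add(event["event_id"])
        cleaned.append({
            "event_id": event["event_id"],
            "user_id": str(event["user_id"]),
            # Standardize names: " Sign Up Start " -> "sign_up_start".
            "name": event["name"].strip().lower().replace(" ", "_"),
            # Standardize timestamps to ISO 8601 in UTC.
            "ts": datetime.fromtimestamp(event["ts"], tz=timezone.utc).isoformat(),
        })
    return cleaned
```

Keeping the cleaning logic in one pure function makes it easy to rerun against the preserved raw events whenever a rule changes.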

Load Layer:

Cleaned Data → BigQuery Tables → Data Studio Dashboards → Python Analytics → Stakeholder Reports

Key Technical Decisions

1. Event Tracking Strategy

Designed comprehensive logging for:

User registration funnel (each step individually tracked)

Feature adoption patterns (daily/weekly/monthly usage)

Session analytics (duration, activity, drop-off points)

Error tracking (crashes, failed actions, performance issues)
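One way to keep these four tracking areas consistent is a single typed event record. The sketch below is illustrative only: the class names, field names, and the example event are assumptions, not the schema actually shipped in the app.

```python
from dataclasses import dataclass, field, asdict
from enum import Enum

class EventCategory(Enum):
    # One category per tracking area listed above.
    REGISTRATION = "registration"
    FEATURE = "feature"
    SESSION = "session"
    ERROR = "error"

@dataclass(frozen=True)
class TrackedEvent:
    """Hypothetical event record shared by mobile and web clients."""
    name: str
    category: EventCategory
    user_id: str
    timestamp_ms: int
    params: dict = field(default_factory=dict)

    def to_row(self):
        """Flatten to a plain dict suitable for loading into a warehouse table."""
        row = asdict(self)
        row["category"] = self.category.value
        return row

# Example: one individually tracked step of the registration funnel.
step_event = TrackedEvent(
    name="signup_step_completed",
    category=EventCategory.REGISTRATION,
    user_id="u1",
    timestamp_ms=1_700_000_000_000,
    params={"step": 2},
)
```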

2. Data Quality Assurance

Raw Data Preservation: Stored unprocessed events in BigQuery for audit trails

Validation Pipeline: Built automated checks comparing logged vs. actual user behavior

Discrepancy Alerts: Set up monitoring to catch data integrity issues immediately
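A logged-versus-actual check of this kind might look like the sketch below. The 5% tolerance, the function name, and the idea of using backend record counts as the reference are assumptions for illustration; the source does not specify how the comparison was implemented.

```python
def find_discrepancies(logged_counts, actual_counts, tolerance=0.05):
    """Flag events whose logged count deviates from a reference count.

    logged_counts: {event_name: count} taken from the analytics events.
    actual_counts: {event_name: count} taken from a trusted source,
                   such as backend database records (assumed here).
    Returns a list of (event_name, logged, actual, relative_gap) tuples.
    """
    alerts = []
    for name in sorted(set(logged_counts) | set(actual_counts)):
        logged = logged_counts.get(name, 0)
        actual = actual_counts.get(name, 0)
        if actual == 0:
            # Events logged with no matching reference records are suspicious.
            if logged > 0:
                alerts.append((name, logged, actual, None))
            continue
        relative_gap = abs(logged - actual) / actual
        if relative_gap > tolerance:
            alerts.append((name, logged, actual, relative_gap))
    return alerts
```

Run on a schedule, a non-empty result can feed whatever alerting channel the team already uses.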

Technical Implementation & Team Collaboration

Data Infrastructure Design

Analytics Stack Architecture:

Mobile/Web App → Google Analytics 4 → BigQuery Data Warehouse → Data Studio Dashboards

My Role in Technical Implementation:

Data Requirements Definition: Specified event tracking schema and KPI measurement frameworks

Analytics Strategy: Designed data collection approach balancing business needs with technical constraints

Cross-functional Collaboration: Worked with development team to implement tracking specifications

Quality Assurance Partnership: Collaborated with development and QA teams to ensure data governance and accuracy

Data Governance & Quality Framework

Data Quality Challenges & Solutions:

Problem: Inconsistencies between logged events and actual user behavior

My Approach: Established data validation standards and worked with development team to implement dual-verification processes

Collaboration: Led cross-team efforts to define data accuracy requirements and validation procedures

Outcome: Significantly improved data reliability for business decision-making

Performance Optimization Strategy:

Challenge: Dashboard loading times affecting stakeholder adoption

Analysis: Evaluated the trade-off between real-time data updates and faster queries against pre-aggregated tables

Solution Design: Collaborated with technical team to implement optimized data refresh strategies

Result: Substantially improved dashboard responsiveness and user experience
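The pre-aggregation side of that trade-off can be illustrated with a small sketch: instead of scanning raw events on every dashboard load, a scheduled job collapses them into one summary row per day. The field names and the daily grain are assumptions for the example, not details confirmed by the project.

```python
from collections import defaultdict

def preaggregate_daily(events):
    """Collapse raw events into one summary row per calendar day.

    events: iterable of dicts with 'user_id' and an ISO 8601 'ts' string
            (assumed format, e.g. '2024-01-01T09:30:00+00:00').
    """
    users_by_day = defaultdict(set)
    events_by_day = defaultdict(int)
    for event in events:
        day = event["ts"][:10]  # ISO 8601 timestamps start with YYYY-MM-DD
        users_by_day[day].add(event["user_id"])
        events_by_day[day] += 1
    return [
        {
            "day": day,
            "active_users": len(users_by_day[day]),
            "event_count": events_by_day[day],
        }
        for day in sorted(users_by_day)
    ]
```

The dashboard then reads the small summary table, at the cost of data being only as fresh as the last refresh.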

Data-Driven Cultural Transformation

Stakeholder-Specific Dashboards

Executive Dashboard

MAU/DAU trends and growth metrics

Revenue attribution and conversion funnels

High-level KPIs with drill-down capabilities

Product Dashboard

Feature adoption rates and user journey analysis

A/B test results and statistical significance tracking

User cohort behavior and retention metrics

Cultural Impact: The "Aha!" Moment

Before: Weekly meetings were 2-hour opinion battles

"I think users don't like this feature"

"My gut says we should prioritize X"

Decisions based on loudest voice or highest authority

After: 45-minute data-informed discussions

"Funnel analysis shows 40% drop-off at permission screen"

"A/B test indicates 23% improvement with new onboarding flow"

Decisions backed by statistical evidence

Key Transformation: Non-technical team members began independently exploring dashboards, asking data-informed questions, and proposing hypothesis-driven experiments.

Data Analysis & Strategic Insights

Analytics Infrastructure Setup

Data Flow: GA4 → BigQuery → Data Studio → Business Insights

Analysis Approach: Real-time dashboard monitoring combined with deep-dive funnel analysis

Stakeholder Access: Self-service analytics enabling cross-team data exploration

Critical Business Insight Discovery

Hypothesis-Driven Analysis Process:

1. Data Observation

Through BigQuery funnel analysis, I identified a critical drop-off pattern:

40% user abandonment at the location permission request screen during signup

This represented the highest single point of friction in our conversion funnel
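The analysis itself ran in BigQuery; the Python sketch below only illustrates the underlying funnel calculation. The step names and user sets are made-up example data, chosen so that the last transition shows a 40% drop.

```python
def funnel_dropoff(step_names, users_per_step):
    """Compute the share of users lost at each step transition.

    step_names: ordered list of funnel step names.
    users_per_step: {step_name: set of user_ids who reached that step}.
    Returns a list of (step, entered, continued, drop_rate) tuples,
    one per transition into `step`.
    """
    report = []
    for i in range(1, len(step_names)):
        previous = users_per_step[step_names[i - 1]]
        current = users_per_step[step_names[i]]
        entered = len(previous)
        # Count only users who also reached the previous step.
        continued = len(current & previous)
        drop_rate = 1 - continued / entered if entered else 0.0
        report.append((step_names[i], entered, continued, drop_rate))
    return report

# Illustrative data only: 10 users see the permission screen, 6 finish signup.
steps = ["signup_started", "location_permission_shown", "signup_completed"]
users = {
    "signup_started": {f"u{i}" for i in range(10)},
    "location_permission_shown": {f"u{i}" for i in range(10)},
    "signup_completed": {f"u{i}" for i in range(6)},
}
report = funnel_dropoff(steps, users)
```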

2. Strategic Hypothesis Formation

Current State: Requesting location permission upfront during signup

Hypothesis: "Moving high-friction requests after user value demonstration will improve conversion"

Reasoning: Users need to understand service value before committing to sensitive permissions

3. Solution Design & Testing

Proposed Change: Delay location permission request until users access location-based features

Implementation Strategy: A/B test with conversion tracking via BigQuery

Success Metrics: Registration completion rate, permission grant rate, first-session retention
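For a metric such as registration completion rate, one common way to judge an A/B result is a two-proportion z-test. The source does not say which statistical test was used, so the sketch below is an assumption, and the function name is hypothetical.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates.

    conv_a, n_a: conversions and sample size for the control group.
    conv_b, n_b: conversions and sample size for the variant group.
    Returns (z, p_value). Assumes both groups are non-empty and the
    pooled conversion rate is strictly between 0 and 1.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis of no difference.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / standard_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

A small p-value suggests the difference between control and variant is unlikely to be chance alone; it says nothing about whether the effect is large enough to matter for the product.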

4. Data-Validated Results

~30% increase in registration completion rates

Higher permission grant rates when requested contextually

Improved user experience through reduced initial friction

Data-Driven Decision Making Framework

Business Question Translation:

Marketing asks: "Why are signups dropping?"

Data Question: "Where in our funnel do users abandon, and what user behaviors precede drop-off?"

Analysis Method: Multi-step funnel analysis with cohort comparison

Insight to Action Process:

Pattern Recognition: Used BigQuery to identify behavioral patterns

Hypothesis Formation: Developed testable assumptions about user motivation

Solution Design: Proposed UX changes based on data insights

Impact Measurement: Tracked before/after metrics to validate improvements

Technical Data Skills Applied

Analytics Architecture:

Designed event tracking schema for comprehensive user journey analysis

Built BigQuery data models supporting both real-time and historical analysis

Created Data Studio dashboards enabling stakeholder self-service analytics

Business Intelligence:

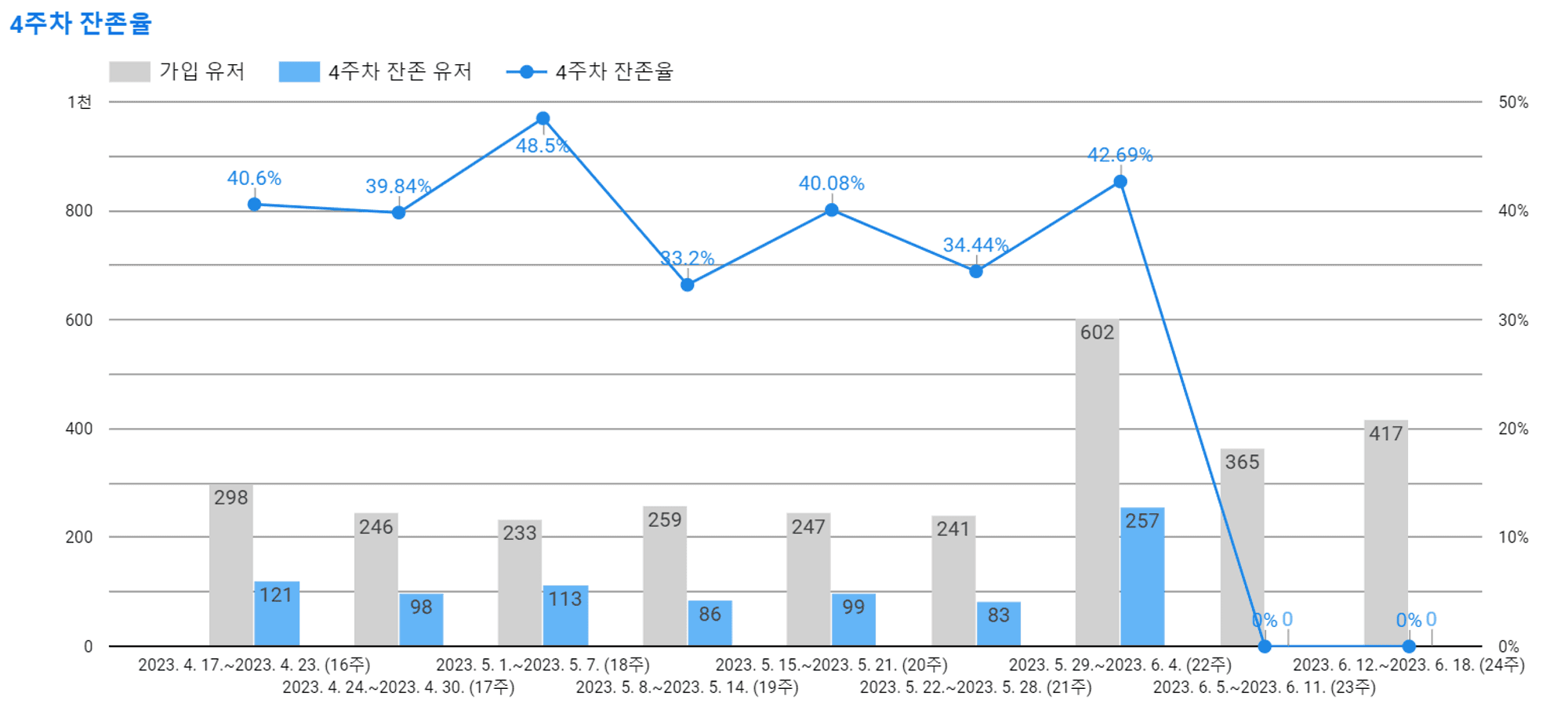

Performed cohort analysis to understand user retention patterns

Conducted funnel analysis to identify conversion bottlenecks

Established KPI frameworks connecting user behavior to business outcomes
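As a rough illustration of the cohort analysis mentioned above, the sketch below groups users by signup week and measures what fraction were active in each later week. The input shapes and the weekly grain are assumptions for the example.

```python
from collections import defaultdict
from datetime import date

def weekly_retention(signup_dates, activity):
    """Compute retention by signup cohort and weeks since signup.

    signup_dates: {user_id: date of signup}.
    activity: iterable of (user_id, date) pairs, one per active day.
    Returns {((iso_year, iso_week), week_offset): retained_fraction}.
    """
    def cohort_of(day):
        # ISO (year, week) of the signup date identifies the cohort.
        return tuple(day.isocalendar())[:2]

    cohort_users = defaultdict(set)
    for user_id, signed_up in signup_dates.items():
        cohort_users[cohort_of(signed_up)].add(user_id)

    active = defaultdict(set)  # (cohort, week_offset) -> active user_ids
    for user_id, day in activity:
        signed_up = signup_dates.get(user_id)
        if signed_up is None or day < signed_up:
            continue  # ignore unknown users and pre-signup activity
        week_offset = (day - signed_up).days // 7
        active[(cohort_of(signed_up), week_offset)].add(user_id)

    return {
        key: len(users) / len(cohort_users[key[0]])
        for key, users in active.items()
    }
```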

Key Learnings

Technical Growth

1. ETL Design Principles

Learning: Raw data preservation is crucial for debugging and reprocessing

Application: Always maintain audit trails and version control for data transformations

2. Scalable Architecture Decisions

Learning: Over-engineering early wastes time, but planning for scale prevents rewrites

Application: Started simple with Firebase, designed for BigQuery scalability

Business Impact Understanding

3. Data Infrastructure as Strategic Asset

Learning: Proper data collection becomes more valuable over time

Application: Invested 20% extra effort in data quality to unlock long-term insights

4. Cultural Change Through Accessibility

Learning: Beautiful dashboards don't create a data culture; easy access to actionable insights does

Application: Prioritized stakeholder training and self-service capabilities over advanced features

Cross-Functional Collaboration

5. Understanding Diverse Analytics Needs

Learning: Each team needs different data granularity and update frequencies

Application: Designed flexible data models supporting multiple use cases

Tools & Technologies

Data Collection: Google Firebase Analytics & Events

Data Storage: Google BigQuery (data warehouse)

Data Processing: Python (pandas, numpy), SQL

Visualization: Google Data Studio, Custom Python dashboards

Infrastructure: Google Cloud Platform